Ever since EIVA established a dedicated AI software development team in 2017, the team has been sailing forward with powerful technological advancements in its sails. This has resulted in a long list of cutting-edge capabilities with applications such as autonomous navigation, automatic eventing and real-time quality control (QC).

At the same time, AI hardware technology is advancing fast too! We recently upgraded our AI onboard computer, which is one of two computers we use for onboard processing on vehicles such as AUVs (the other is for data processing workflows all the way from acquisition to QC). By integrating our advanced AI software solutions with the latest hardware platform, we unlock the software’s full potential onboard AUVs.

NVIDIA’s Xavier, the new onboard computer used for EIVA’s AI software tools, brings a wave of benefits to autonomous operations. With Xavier onboard, users can run several AI-based tasks simultaneously and run them faster than on previous boards. This lets users take advantage of the ever-growing list of AI capabilities in NaviSuite – improving possibilities for advanced onboard processing and thereby autonomous operations.

We have gathered all our AI software features on this new onboard computer. This means a single board gives users the possibility to use a variety of tools supporting autonomous operations through automated eventing, QC and navigation-aiding. It also means it’s easier to integrate these advanced software features into your vehicle.

Dive into EIVA’s tools supporting autonomous operations onboard AUVs…

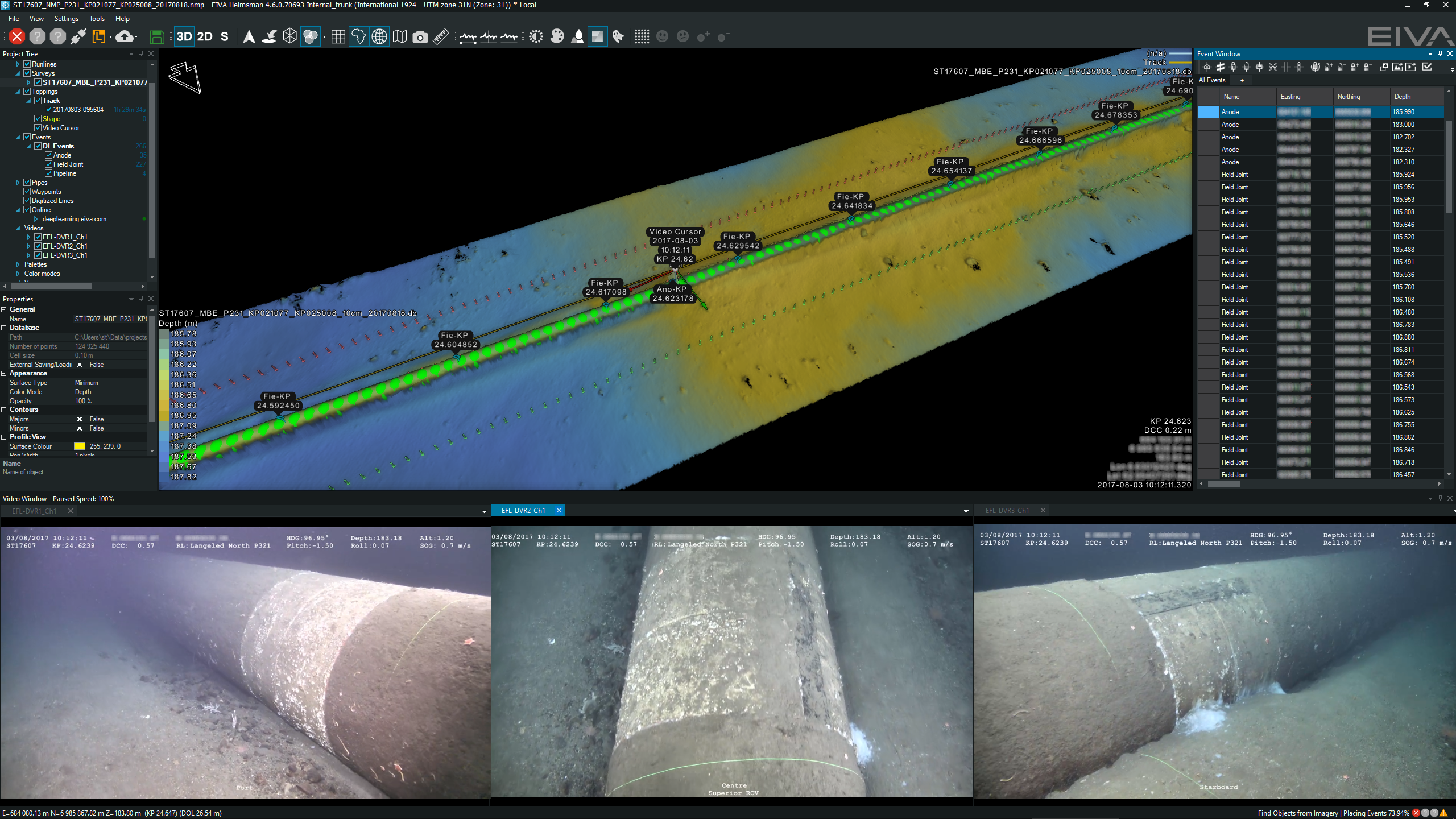

Automatically registered and categorised events are shown in several views – in a list (upper right) and on a DTM (upper middle)

An automatic eventing tool for object detection, NaviSuite Deep Learning detects, classifies and registers observations in real time. This means that you can send out an AUV equipped with the AI payload board and NaviSuite Deep Learning, and when you recover it, you have a list of events ready – leading to significant time savings.

This eventing tool also enables retasking of the AUV based on what it observes. For example, it can register critical observations, such as damage, while the AUV is at the site of the damage. This can then immediately be used to retask the AUV, rather than waiting until it has been recovered and the data processed, then sending it out again with a new mission.
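To make the retasking idea concrete, here is a minimal sketch in Python. It is not NaviSuite code: the `Event` fields, the set of critical categories, the confidence threshold and the `plan_retask` helper are all hypothetical names chosen for illustration. The sketch simply filters registered events down to confident, critical ones and returns their positions along the pipe as candidate waypoints for a closer look.

```python
from dataclasses import dataclass

# Hypothetical categories treated as critical (an assumption, not a NaviSuite list).
CRITICAL_CATEGORIES = {"damage", "free_span"}


@dataclass
class Event:
    category: str      # label assigned by the detector
    confidence: float  # detector score between 0 and 1
    kp: float          # position along the pipe (kilometre point)


def plan_retask(events, min_confidence=0.8):
    """Return sorted positions of confident, critical events to revisit."""
    return sorted(
        e.kp
        for e in events
        if e.category in CRITICAL_CATEGORIES and e.confidence >= min_confidence
    )


events = [
    Event("anode", 0.95, 1.2),   # confident, but not critical
    Event("damage", 0.91, 3.4),  # confident and critical
    Event("damage", 0.42, 5.0),  # critical, but below the threshold
]
waypoints = plan_retask(events)
print(waypoints)
```

In a real mission the returned positions would feed the vehicle's mission planner; how that hand-over happens depends on the vehicle and is outside the scope of this sketch.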

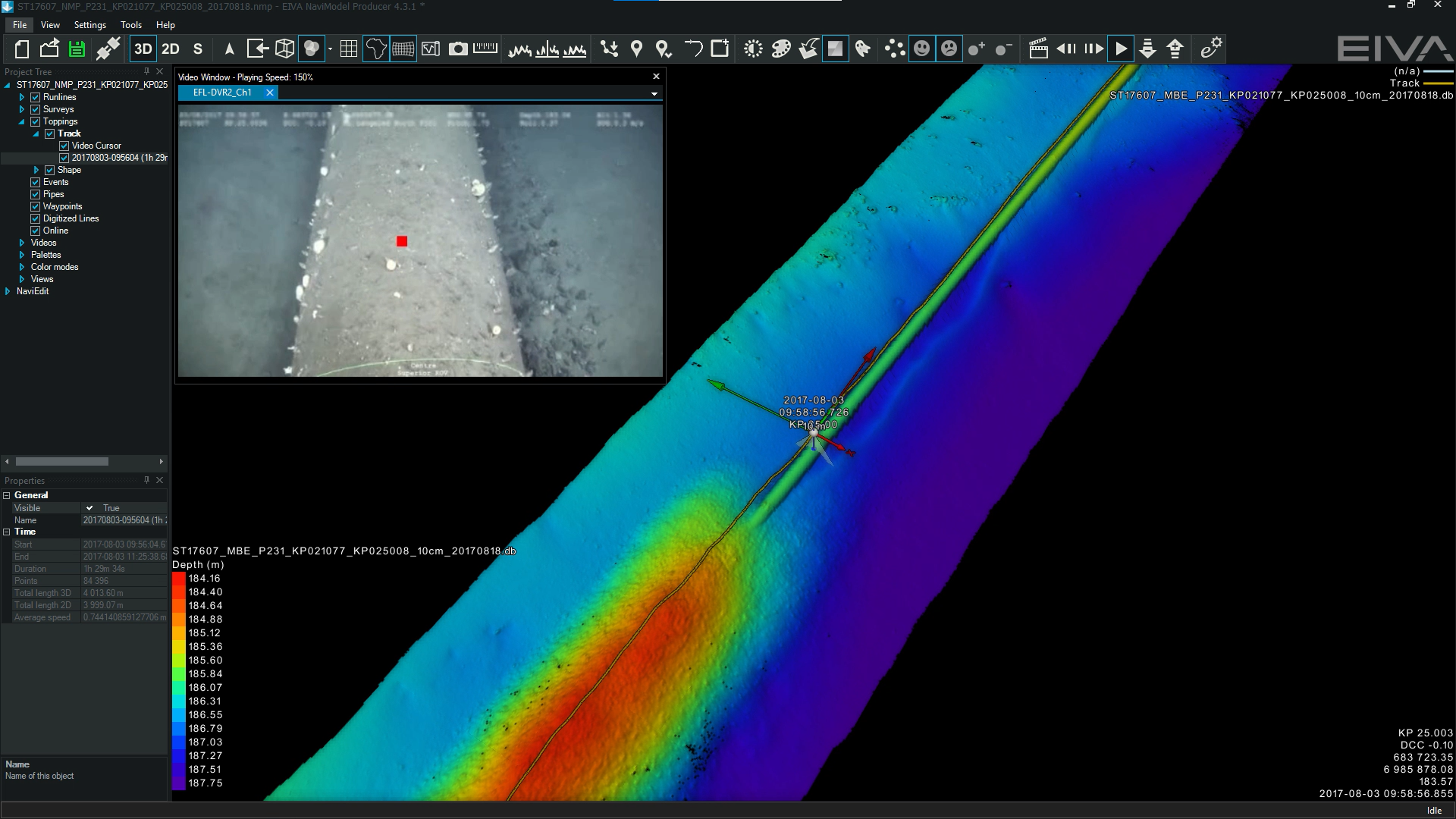

The visual pipetracker navigation method using the camera feed to follow the pipe

The visual pipetracker navigation method provides AUVs with better navigation and camera data QC during missions. Essentially, it tracks the centre of a pipe based on camera, sonar or laser scanner data. This tracked pipe location can then be used to ensure that the AUV follows the pipe rather than another object or an outdated map.

When using camera data, the pipetracker method also works as a quality check of the images the AUV is collecting. This is because the method needs a clear view of the pipe to calculate the centre, so it can immediately register if the pipe is not properly visible in the camera view – based on this information, the AUV can be retasked. By regularly checking the quality of the camera data and retasking based on any interruptions, the method minimises the risk of gathering unusable image data during an AUV mission, helping to save time.
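The link between centre tracking and image QC can be illustrated with a small Python sketch. This is not EIVA's pipetracker algorithm: it assumes a binary mask in which each pixel has already been classified as pipe (1) or background (0), and the `track_pipe_centre` function and its two thresholds are hypothetical. The centre is taken as the mean column of the pipe pixels, and if too few image rows show the pipe, no centre is reported, which is the cue for retasking.

```python
def track_pipe_centre(mask, min_pixels=3, min_visible_rows=0.5):
    """Estimate the pipe centre column from a binary mask.

    Returns (centre, visible), where visible is the fraction of rows
    showing at least min_pixels pipe pixels. The centre is None when
    visible falls below min_visible_rows, meaning the view is unusable.
    """
    centres = []
    for row in mask:
        cols = [x for x, value in enumerate(row) if value]
        if len(cols) >= min_pixels:
            centres.append(sum(cols) / len(cols))
    visible = len(centres) / len(mask) if mask else 0.0
    if visible < min_visible_rows:
        return None, visible
    return sum(centres) / len(centres), visible


# A clear view: the pipe occupies columns 2 to 4 in every row.
clear = [[0, 0, 1, 1, 1, 0, 0] for _ in range(4)]
# An obscured view: no pipe pixels at all, for example due to turbidity.
obscured = [[0] * 7 for _ in range(4)]

for name, mask in (("clear", clear), ("obscured", obscured)):
    centre, visible = track_pipe_centre(mask)
    action = "follow pipe" if centre is not None else "retask"
    print(name, centre, visible, action)
```

Using one measurement for both steering and QC is the design point here: the same quantity that guides the vehicle also reveals when the imagery is no longer worth collecting.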

In addition to tools like these for autonomous pipeline inspections, EIVA’s software development crew is working on tools for autonomy in many areas, such as automatic eventing of coral habitats and inspection of mussel farms. Dive into more ways we are using AI to enable autonomous operations.

Stay tuned and follow the EIVA crew for more news. If you have any questions, feel free to reach out to the EIVA crew.